Without Form and Void: Learning the Residual of Bioprocess Scale

We argue that encoding Galileo's Similarity Theories as ML inductive biases is the only way to transform bioprocess scaling from alchemical art into a predictable engineering practice.

Act I - The Venetian Arsenal

Early Morning

The cool morning air was thick with the evergreen scent of pitch. Hundreds of skilled hands slathered the tarry pine resin onto ships’ hulls in layers, their motions fine-tuned through generational labor.

Thick cords of handmade rope groaned to keep the galleys tethered to the shipyard, adding to the din of caulking mallet strikes and impassioned bartering in the world’s preeminent proto-factory, the Venetian Arsenal.

Perched atop a nearby turret, three cloaked men surveyed the Arsenal’s pre-daybreak bustle. Salviati, the trio’s wisest, noted that the master builders launched the largest ships to sea using oversized struts, with proportions vastly exaggerated relative to the shores used on smaller galiots.

The builders offered no explanation for this. It was simply what their predecessors did and what experience had taught them.

Sagredo—the intelligent layman—noticed this and began focusing the group’s conversation on the matter. “Now, all reasonings about mechanics have their foundations in geometry,” he asserted, puzzled why scaling up the proven design didn’t confer the same structural integrity.

Salviati paused before citing numerous examples of both natural and manmade objects whose small and larger versions were structurally proportional, but whose physical properties were not. “If a scantling can bear the weight of ten scantlings, a similar beam will by no means be able to bear the weight of ten like beams,” Salviati said, suggesting that mere geometric similarity was insufficient to extrapolate the strength of small scantlings to larger beams.

Nature, he added, had long since arrived at this conclusion on its own—the bones of large animals were not simply scaled-up versions of smaller ones, but rather disproportionately thickened.

Realizing this, Sagredo internalized that his first, simple hypothesis—that scaling the dimensions of a structure by some factor would result in its other properties growing proportionately—was incorrect, violated by Salviati’s empiricism.

Simplicio—one whose imagination was stilled by his deference to Aristotelian orthodoxy—felt uneasy at the lack of a resolution. It was alluring to believe geometry offered a universal tool for scaling, a reliable bridge between small and large.

But it was not so.

He brooded to himself as the trio abandoned their post, eager to go hunting for answers amongst the crowd.

Late Morning

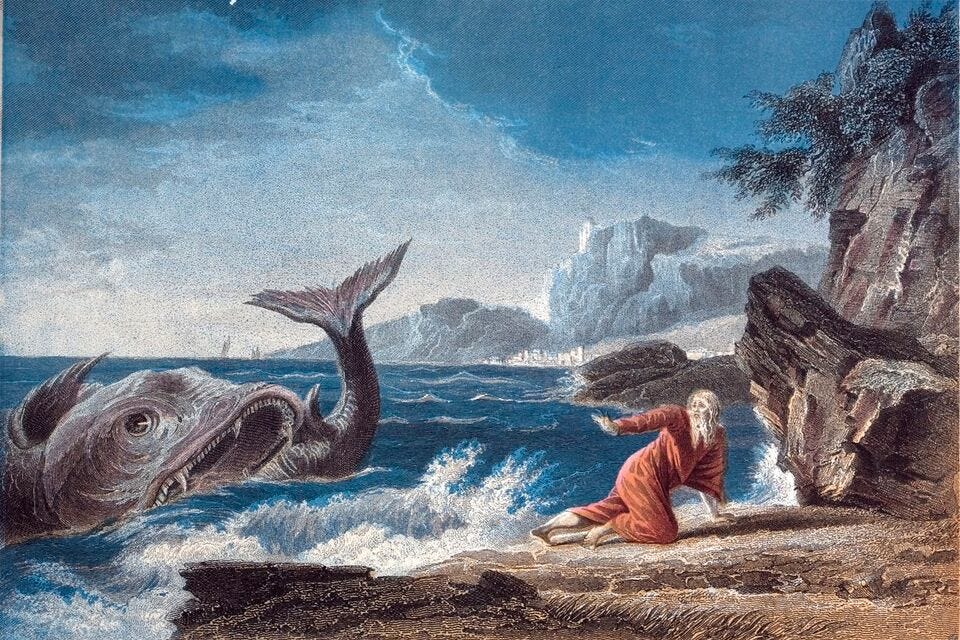

Like the great whale of Nineveh, the Arsenal swallowed them whole.

The abstract discussion on proportionality, so cleanly debated from the turret, bore down on the trio. Every swinging church bell and right-angled buttress beckoned to them with clues about the nature of reality.

Sagredo’s gaze floated upwards along a rope suspended from a towering crane. It swayed gently in the morning breeze, tracing out a slow, stately arc. He turned over one of Salviati’s claims: that the periods of pendulums correspond to one another as the square roots of their lengths.

Rummaging through his cloak, Sagredo proclaimed, “I can find the length of the [rope] from the numbers of vibrations of these two pendulums during the same period of time.”

He produced a short cord measuring one braccio, give or take, and affixed a weight at one end before letting it swing.

Sagredo pressed Simplicio into service, having him tally the small cord’s frantic arcs while he himself tracked the larger’s patient movements. After five swings, he looked over at Simplicio, who had counted sixty.

He squared five and sixty, giving twenty-five and 3,600. Sagredo paused to compute the final ratio. “So, 144 braccia is the length of the [rope],” he said. Salviati nodded in approval, pleased at the application of his theorem.

The trio carried on, disappearing altogether into the crowd of arsenalotti, mostly content with their foray into homologous systems.

Something still clawed at Salviati. He doubted length was the sole determinant of a pendulum’s period of oscillation, but the full accounting escaped him.

It would remain a mystery for his lifetime.
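
Sagredo’s arithmetic can be checked in a few lines. It rests on the law Salviati cites. A pendulum’s period grows with the square root of its length, so over a shared interval the swing counts are inversely proportional to those square roots. That gives L_rope = L_cord × (n_cord / n_rope)². Below is a minimal Python sketch. The function name is a hypothetical choice made for illustration.

```python
def length_from_swings(ref_length, ref_swings, target_swings):
    """Infer an unknown pendulum length from swing counts over the same interval.

    Period T grows as sqrt(L), and swings counted in a fixed interval n ~ 1/T,
    so L_target / L_ref = (n_ref / n_target) ** 2.
    """
    return ref_length * (ref_swings / target_swings) ** 2

# A one-braccio cord swings 60 times while the rope swings 5 times.
rope_length = length_from_swings(ref_length=1.0, ref_swings=60, target_swings=5)
print(rope_length)  # 144.0 braccia
```

Squaring the counts (3,600 over 25) and taking the ratio is the same computation as squaring the ratio of counts (12 squared).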

Act II — The Rules Governing Similar Systems

Galileo’s Similarity Theory

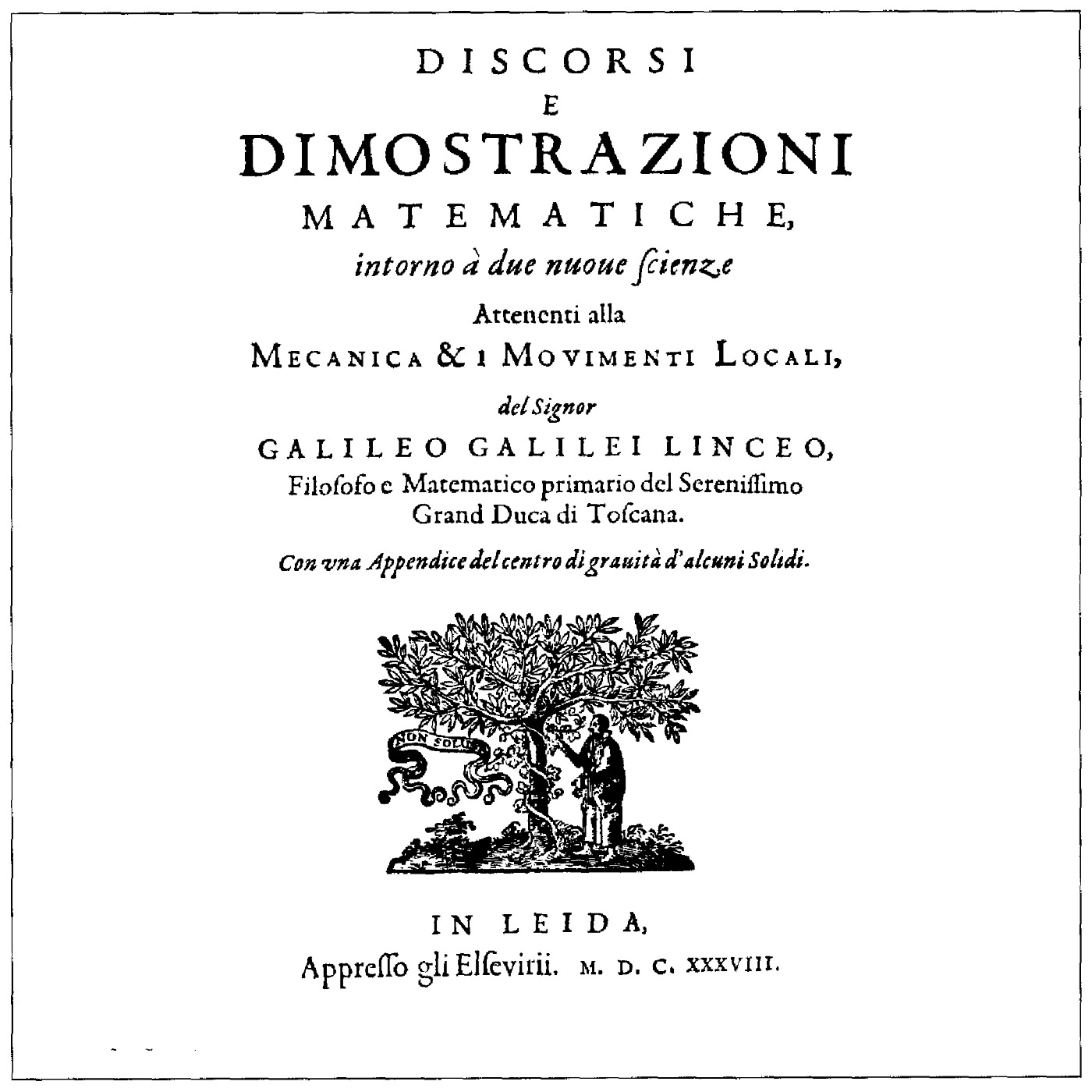

The characters of Sagredo, Salviati, and Simplicio served as Galileo’s sword and shield. By splitting his Copernican worldview across three characters with different epistemic positions, Galileo had hoped to evade the fury of the Roman Inquisition.

Unfortunately, his veil of plausible deniability failed.

The Church tried Galileo in Rome in 1633 and sentenced him to indefinite house arrest. Condemned to Church supervision and in poor health, Galileo arranged for the publication of his final masterpiece featuring Sagredo, Salviati, and Simplicio: Discourses and Mathematical Demonstrations Relating to Two New Sciences (1638).

Amongst its myriad contributions to mechanics, Two New Sciences helped usher in the notion of similar systems, one of the most powerful and far-reaching concepts in the physical sciences.

Similarity theory provides the scientific basis for modeling and formalizes what Sagredo did with the rigging rope. He inferred the behavior of a large system from observations made about a similar, smaller system.

Critically, Sagredo did so using a dimensionless ratio invariant to system scale.

Many contemporary engineering students are taught only narrowly about their domain’s use of dimensional reasoning. These ratios are memorized, used, and often forgotten in the derelict end-pages of textbooks.

I know because that was my experience too.

Problems with a Falling Film

Every chemical engineer remembers Bird, Stewart, and Lightfoot (BSL), the sacred text of the major’s first bona fide course: transport phenomena.

Our syllabus dealt primarily with fluid mechanics and how the properties of momentum, energy, and mass are exchanged between systems and across scales.

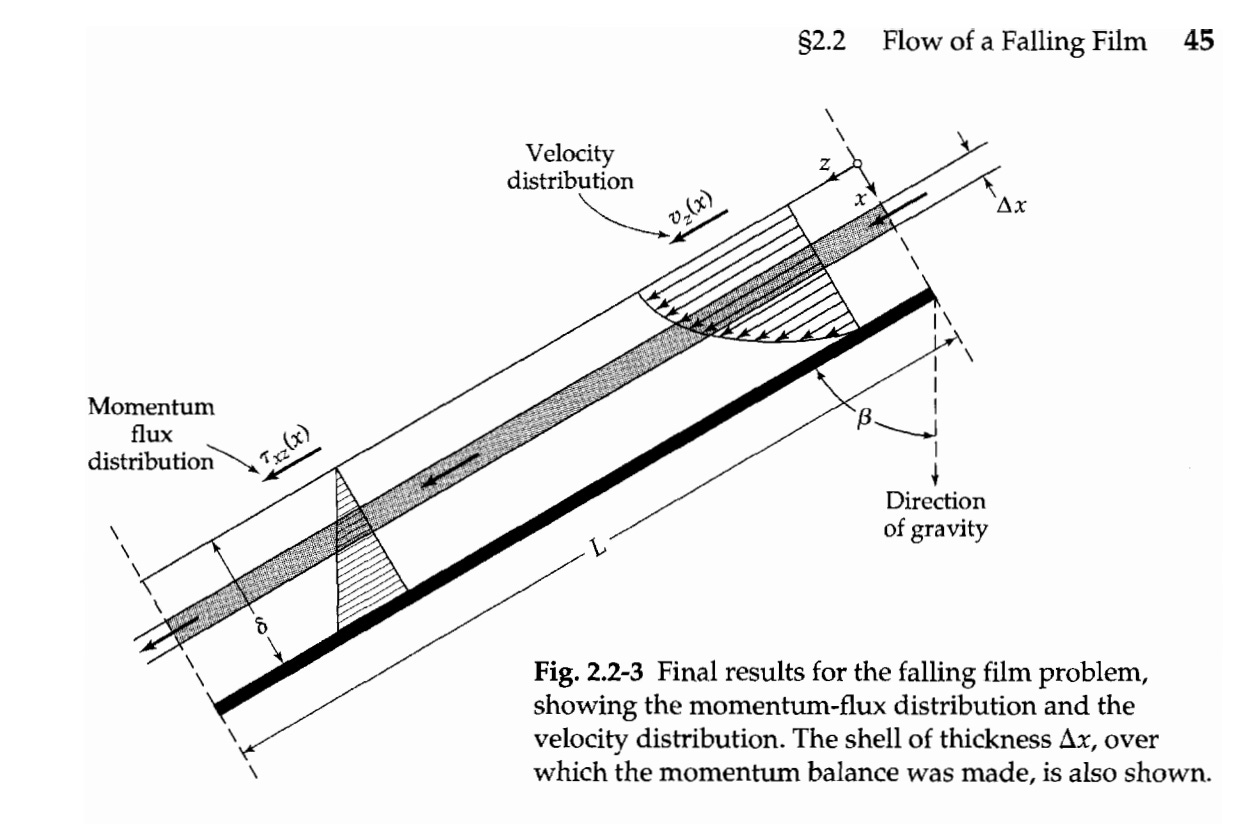

The professor wasted no time introducing us to dimensional analysis. Our sentinel textbook problem focused on a thin film of liquid flowing down an inclined plane under the influence of gravity.

After deriving equations for the film’s momentum flux and velocity distribution along the bulk axis, our classroom ran headfirst into a dimensionless quantity—the Reynolds number (Re).

The Reynolds number was presented unceremoniously as a simple tool to delineate so-called flow regimes.

Discouragingly, we learned that all the equations we had derived were valid only when the film flowed calmly (i.e., laminar and free of ripples), or when Re was less than 20. Between 20 and 1500, the still-laminar film rippled. When Re exceeded 1500, we encountered massively chaotic (i.e., turbulent) flow.

Our math stopped working. We would all try again in Transport 2.
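
The falling-film numbers are easy to reproduce. Consider laminar flow down a plate tilted at angle β from the vertical. For this case BSL gives an average film velocity ⟨v_z⟩ = ρgδ²cos β / (3μ) and defines the film Reynolds number as Re = 4δ⟨v_z⟩ρ/μ. That works out to Re = 4ρ²gδ³cos β / (3μ²). The sketch below assumes water-like properties (ρ = 1000 kg/m³, μ = 1 mPa·s) and uses illustrative film thicknesses. Because Re grows with δ³, a film only a few tenths of a millimeter thick already crosses from one regime to the next.

```python
import math

def film_reynolds(delta, beta_deg, rho, mu, g=9.81):
    """Film Reynolds number Re = 4 * delta * <v_z> * rho / mu (BSL's definition).

    <v_z> is the laminar average velocity rho * g * delta**2 * cos(beta) / (3 * mu),
    with beta measured from the vertical. SI units throughout.
    """
    v_avg = rho * g * delta**2 * math.cos(math.radians(beta_deg)) / (3.0 * mu)
    return 4.0 * delta * v_avg * rho / mu

def film_regime(re):
    """Approximate falling-film regimes quoted in BSL."""
    if re < 20:
        return "laminar, no ripples"
    if re < 1500:
        return "laminar, rippling"
    return "turbulent (laminar formula no longer valid)"

# Water-like film on a vertical plate: Re scales with delta cubed.
for delta_mm in (0.1, 0.2, 0.5):
    re = film_reynolds(delta_mm * 1e-3, beta_deg=0.0, rho=1000.0, mu=1e-3)
    print(f"delta = {delta_mm} mm: Re = {re:.0f}, {film_regime(re)}")
```

The estimate itself assumes a laminar profile. A result far above 1500 therefore does not describe the turbulent film. It only signals that the derivation no longer applies.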

The Violent Art of Scale

The Second Industrial Revolution (1870–1914) brute-forced similarity theory into existence.

Academic ideas like thermodynamics, fluid mechanics, and electromagnetism were ripped from lecture halls and forced to hold at industrial scale.

Generalize or be discarded.

Osborne Reynolds (1842–1912) embodied a new kind of figure that Britain’s maritime expansion demanded: the scientific engineer. One of the first UK professors to hold the title of Professor of Engineering, Reynolds brought the rigor and elegance of physics to bear on hydrodynamics’ most pressing problems.

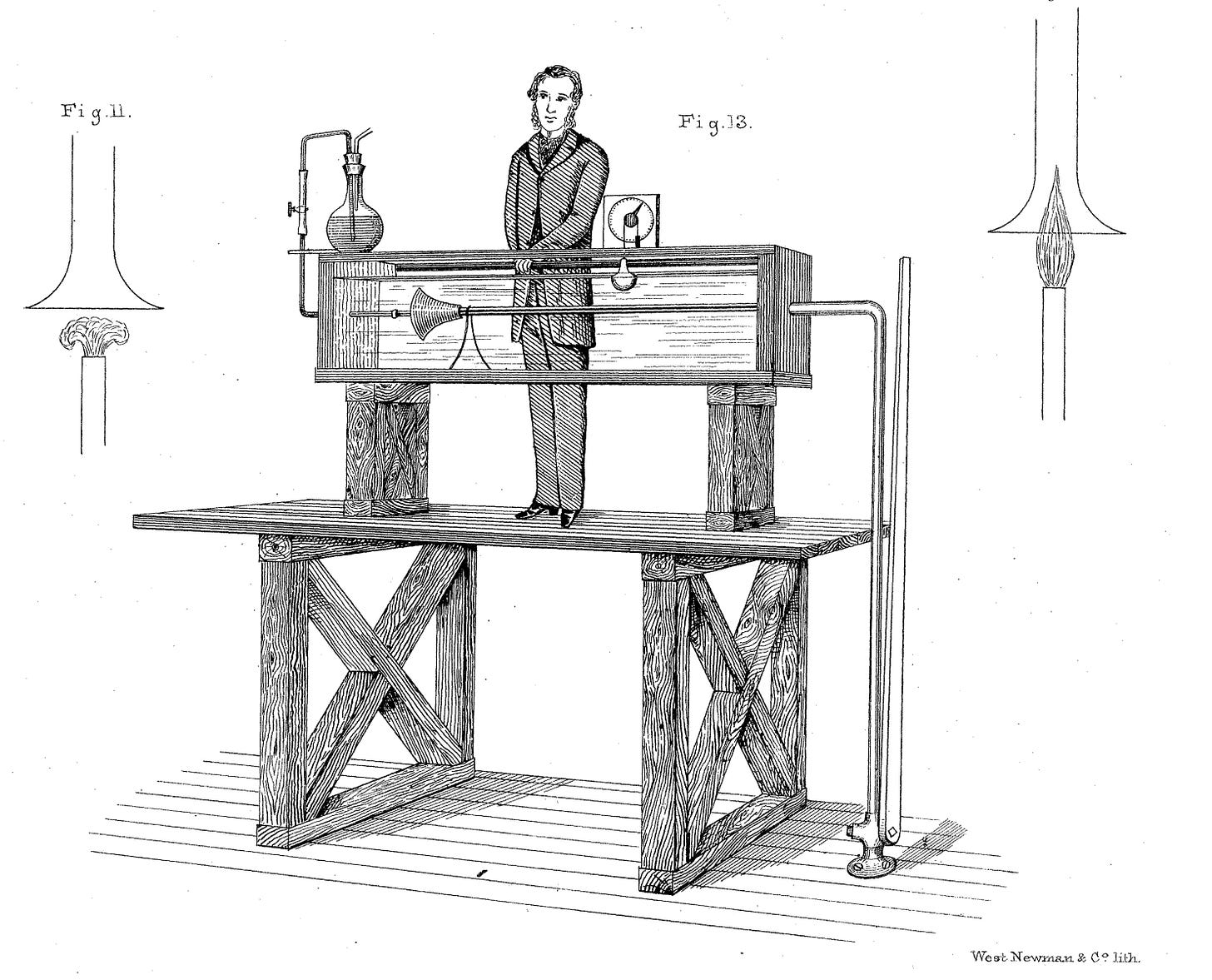

Reynolds devised his famous 1883 dye experiment at Owens College in Manchester. His aim, in part, was to resolve an anomaly that plagued civil and naval engineers.

Geometrically similar pipes containing identical fluids would sometimes snap between laminar and turbulent regimes as the flow rate varied. That similar systems could behave so divergently made industrial scale-up quite unpredictable.

Reynolds’ apparatus injected a thin line of colored dye into the center of a flowing stream of water housed inside a glass pipe. He hypothesized that the dye’s behavior would be governed by a ratio of several variables rather than by their absolute magnitudes.

Reynolds searched across three parameters: fluid velocity, pipe diameter, and water temperature. Temperature served as his proxy for viscosity, which varies smoothly with it.

Peering through the glass, he noted where along the pipe’s length the dye-stream decayed into transitional eddies before it exploded into the surrounding water.

Reynolds’ tabulations converged on a single dimensionless ratio, ρUD/μ (density times velocity times pipe diameter, divided by viscosity), whose critical thresholds governed the dye-stream’s behavior. This was remarkable since analytical descriptions of turbulent flow didn’t exist.

And they still don’t.

Yet similarity theory, in the form of Reynolds’ number, persisted where the exact mathematics couldn’t follow.

The epistemological framework built upon these variables diffused quickly, spreading across the globe and through time, eventually bleeding across the opening pages of my BSL textbook.
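
Reynolds’ ratio can be sketched numerically, along with his use of temperature as a viscosity dial. The viscosity function below uses a standard Vogel-type empirical fit for liquid water. That fit is an assumption, not Reynolds’ own data. The laminar cutoff of 2,300 is a common textbook convention, and real transition depends on how disturbed the inlet flow is. In the sketch, two pipes that differ tenfold in diameter share one Reynolds number when the velocity compensates. Meanwhile, the same pipe can change regime on temperature alone.

```python
import math

def water_viscosity(temp_c):
    """Approximate dynamic viscosity of liquid water in Pa*s (roughly 0-100 degC).

    Vogel-type empirical fit: mu = A * exp(B / (T - C)), with
    A = 2.939e-5 Pa*s, B = 507.88 K, C = 149.3 K, and T in kelvin.
    """
    t_kelvin = temp_c + 273.15
    return 2.939e-5 * math.exp(507.88 / (t_kelvin - 149.3))

def pipe_reynolds(velocity, diameter, temp_c, density=1000.0):
    """Reynolds' ratio rho * U * D / mu for water in a circular pipe (SI units).

    Density is held at 1000 kg/m^3 for simplicity; only viscosity varies with T.
    """
    return density * velocity * diameter / water_viscosity(temp_c)

def pipe_regime(re, critical=2300.0):
    """Crude classification; 2300 is a common textbook cutoff, not a sharp law."""
    return "laminar" if re < critical else "transitional or turbulent"

# Similar systems: a 10x wider pipe at 1/10 the velocity has the same Re.
small = pipe_reynolds(velocity=0.02, diameter=0.01, temp_c=20.0)
large = pipe_reynolds(velocity=0.002, diameter=0.1, temp_c=20.0)

# Same pipe, same velocity: warming the water lowers mu and can flip the regime.
cold = pipe_reynolds(velocity=0.2, diameter=0.01, temp_c=10.0)
warm = pipe_reynolds(velocity=0.2, diameter=0.01, temp_c=40.0)
print(round(small), round(large), pipe_regime(cold), pipe_regime(warm))
```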

Act III — An Act of Applied Ontology

Reynolds knew what he was asserting. His ratio was a declaration about which forces governed the system. The equipoise of laminar and turbulent flow resulted from the invisible contest between viscous and inertial forces, and no other force needed naming.

To invoke the Reynolds number is to stake a claim about what dominates a system’s behavior and what may be safely ignored. To infer the length of the rigging rope, Sagredo unknowingly accepted that the pendulum depended on gravity and inertia, and on nothing else.

Dimensionless numbers are acts of applied ontology.

Every new dimensionless number after Reynolds was a ship’s lantern carried further into the mathematical dark. Each one burned with the same faith, that the unexplored seas ahead would resemble those at home.

That no hidden Leviathan waited in the void to quench the light’s piercing glow.

Reynolds’ lantern was remarkable. Engineers wielded it across the expanding frontier of technology, from sprawling water infrastructure to aerodynamics and beyond. For many years, no scaling endeavor revealed a lurking force that snuffed out Reynolds’ number.

But why was it so durable?

His number satisfied three conditions that sit quietly at the core of dimensional analysis. Each is a different facet of the same requirement—dimensionless numbers succeed when the systems they describe contain no hidden degrees of freedom.

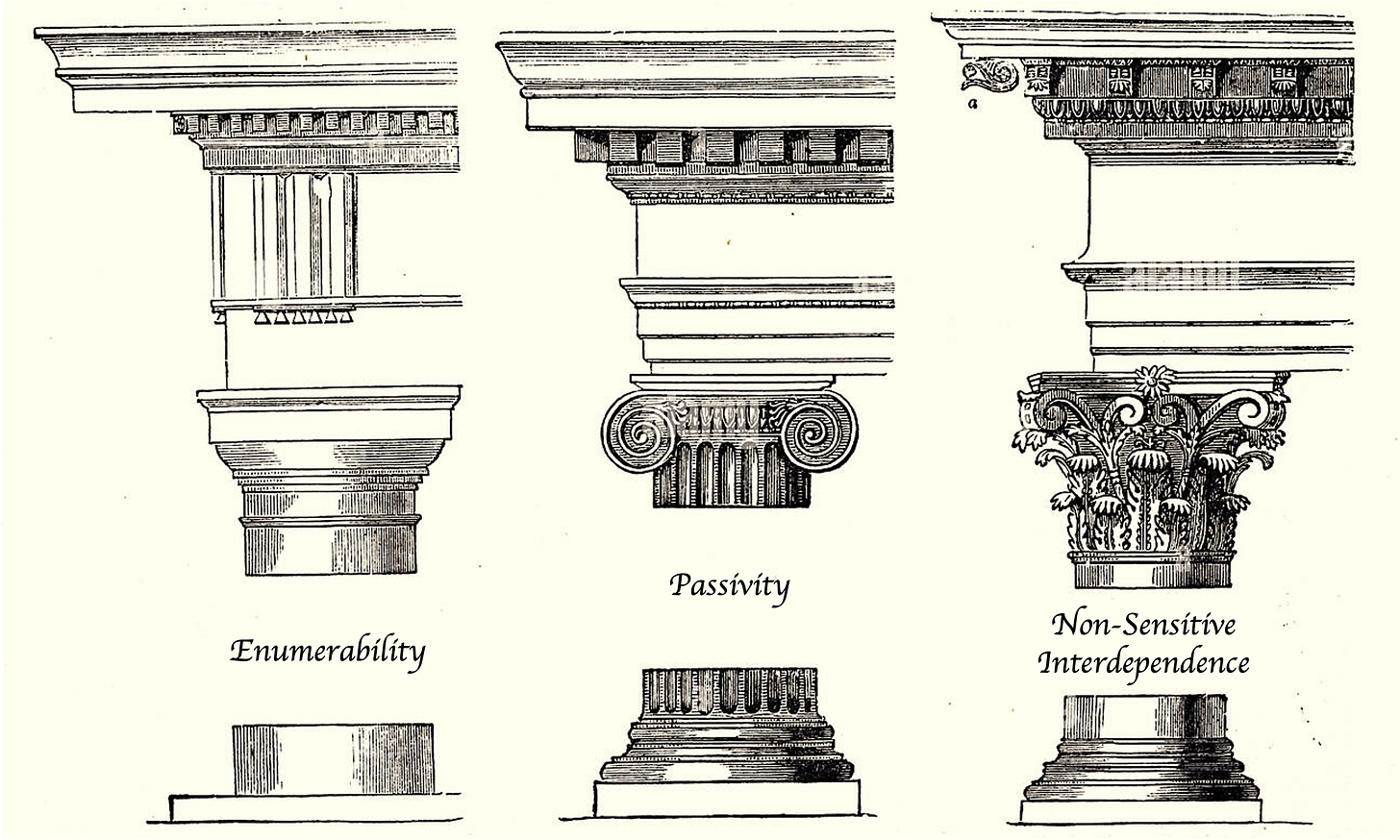

Enumerability — The system-relevant variables form a complete, finite, and knowable set that can be specified prior to analysis.

Passivity — These variables are inputs to the system, not outputs of its own behavior. The system being modeled doesn’t participate in setting the terms of its own description.

Non-Sensitive Interdependence — These variables interact robustly with each other. A small perturbation in one variable cannot spill over explosively into the others.

Reynolds didn’t prove his variable set was complete, but he showed it was sufficient to collapse his data. Enumerability is confirmed by the absence of contradiction, not proven by exhaustion.

Because nothing went catastrophically wrong, no one embarked on a hunt for more variables. But that’s the tricky thing about enumerability: dimensionless numbers don’t warn of their own incompleteness.

The water flowing through Reynolds’ system had no opinion about the pipe. Passivity is a hallmark of systems built up from dumb variables, ones without memory of or a reaction to their environments.

Enumerability and passivity are intertwined. A system that creates its own variables cannot be completely and knowably enumerated beforehand.

When Reynolds turned the dial on velocity, his ratio changed smoothly, even near the critical transition to turbulent flow. Non-sensitive interdependence requires that sets of variables move together predictably and that the function doesn’t spontaneously decay or explode.

The dawning years of the twentieth century would first formalize these conditions and then stretch them to destruction.

Act IV — Trials of Faith

A Codex in Two Parts

Dimensional analysis had anchored on every shore. Its use was everywhere, yet its mathematical justification was nowhere.

Edgar Buckingham, a research physicist at the National Bureau of Standards in Washington, D.C., desperately wanted a textbook on similarity theory. But none existed.

So he wrote one.

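The result was two 1914 manuscripts on Physically Similar Systems. Buckingham’s Pi Theorem, which he credited to his predecessors, proved that any complete physical equation can be reduced to a relation among a smaller set of dimensionless groups, the Pi groups. An equation among n variables built from k independent base dimensions (such as mass, length, and time) reduces to n − k groups.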

Buckingham’s derivations showed that two systems are physically similar when their Pi groups take the same value. Not when they look alike. Not when their measurements are the same.

But when their Pi ratios match.
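
Buckingham’s recipe can be mechanized. Write each variable’s exponents of mass (M), length (L), and time (T) as a column of a dimension matrix. Every dimensionless group then corresponds to an integer vector in that matrix’s null space. The sketch below uses a hand-rolled exact-arithmetic elimination rather than any particular library’s routine. For Reynolds’ pipe it recovers ρUD/μ, since four variables over three base dimensions leave one group. For Sagredo’s pendulum it recovers T²g/L, and the bob’s mass drops out entirely. When more than one group exists, the returned basis is one valid choice among many.

```python
from fractions import Fraction
from math import lcm

def pi_exponents(dims):
    """Integer exponent vectors spanning the null space of a dimension matrix.

    dims[i][j] is the exponent of base dimension i (e.g. M, L, T) in variable j.
    Each returned vector gives exponents that make the product of the variables
    dimensionless. Uses exact Gauss-Jordan elimination with Fractions.
    """
    m = [[Fraction(x) for x in row] for row in dims]
    n_rows, n_cols = len(m), len(m[0])
    pivots, r = [], 0
    for c in range(n_cols):
        p = next((i for i in range(r, n_rows) if m[i][c] != 0), None)
        if p is None:
            continue  # no pivot in this column: it is a free variable
        m[r], m[p] = m[p], m[r]
        pv = m[r][c]
        m[r] = [x / pv for x in m[r]]
        for i in range(n_rows):
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
        if r == n_rows:
            break
    groups = []
    for free in (c for c in range(n_cols) if c not in pivots):
        vec = [Fraction(0)] * n_cols
        vec[free] = Fraction(1)
        for row, pc in enumerate(pivots):
            vec[pc] = -m[row][free]
        scale = lcm(*(x.denominator for x in vec))
        ints = [int(x * scale) for x in vec]
        if next(x for x in ints if x != 0) < 0:  # convention: first exponent positive
            ints = [-x for x in ints]
        groups.append(ints)
    return groups

# Rows: M, L, T exponents. Columns: rho, U, D, mu.
reynolds = [[1, 0, 0, 1], [-3, 1, 1, -1], [0, -1, 0, -1]]
print(pi_exponents(reynolds))  # [[1, 1, 1, -1]], i.e. rho*U*D/mu

# Columns: period, length L, gravity g, bob mass m.
pendulum = [[0, 0, 0, 1], [0, 1, 1, 0], [1, 0, -2, 0]]
print(pi_exponents(pendulum))  # [[2, -1, 1, 0]], i.e. period**2 * g / L
```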

Physical similarity, Buckingham professed, wasn’t a blanket property of systems. Instead, it only existed relative to a specific phenomenon—a specific Pi group. Systems could sometimes be similar through the lens of one property (e.g., drag), but not another (e.g., heat transfer).

These mathematical guarantees were conditional.

At the outset of the Pi derivation, Buckingham stated that dimensionless scaling requires that the variable set be completely enumerated. No hidden degrees of freedom.

“If none of the quantities involved in the relation has been overlooked, the [Pi] equation will give a complete description of the relation subsisting among the quantities represented in it, and will be a complete equation,” he asserted.

Hidden, or latent, variables are the most dangerous kind. They are often invisible within the experimental window because their values don’t vary enough to betray their existence.

Viscosity might have stayed hidden from Reynolds had he not already known that it could be smoothly bridged to the water’s temperature.

Latent variables are our Leviathans lying in wait.

At the Edge of Separability

Reynolds, alongside the other pioneers of dimensional analysis, explored where matter flowed, heated, and moved, but not where it transformed. A natural next question was what would happen if the fluid, or system, reacted.

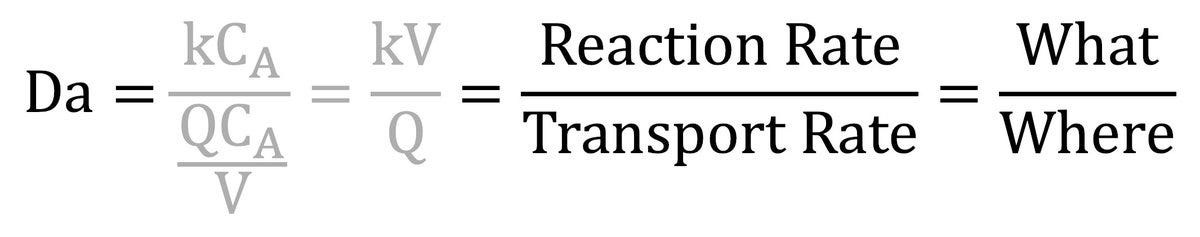

The German chemist Gerhard Damköhler (1908–1944) took up this question, extending dimensional analysis into reactive systems with his number (Da), which captures the competition between reaction rates and fluid transport rates.

It told engineers whether a reactor’s output was limited by its chemistry or its mixing. For the first time, the conditions of physical similarity were being asked to hold across a new kind of boundary.

The assumption of separability between the What and the Where.

For simple, first-order chemical systems (A → B), the reaction rate is the product of an intrinsic rate constant and the concentration of species A. The What.

The transport rate describes the physical environment through terms for flow velocity, reactor volume, and diffusion coefficients. The Where.

The What and the Where are separable for dumb fluids, ones that do not know if they are in a test tube or a 50,000-liter (L) reaction vessel. The divisor line is a thin boundary separating two closed worlds.

Damköhler’s number worked for industrial chemical scaling because the reactive fluids still behaved passively. The rates were fully enumerable. Separability ensured that fluctuations of one force didn’t spill over catastrophically into the other.
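
For the first-order case, the separability is explicit. The first Damköhler number is Da = kτ. Here the intrinsic rate constant k is the What, and the residence time τ = V/Q, set by vessel volume V and flow rate Q, is the Where. For ideal reactors, conversion depends on Da alone: X = Da/(1 + Da) in a perfectly mixed tank and X = 1 − e^(−Da) in plug flow. In the sketch below, the rate constant and flow rates are hypothetical. A 1 L bench tank and a 50,000 L vessel with the same τ land on the same Da, and therefore on the same conversion.

```python
import math

def damkohler_first_order(k, volume, flow_rate):
    """First Damkohler number for A -> B: Da = k * tau, with tau = V / Q."""
    return k * volume / flow_rate

def cstr_conversion(da):
    """Steady-state conversion of A in an ideal, perfectly mixed tank."""
    return da / (1.0 + da)

def pfr_conversion(da):
    """Conversion of A in an ideal plug-flow reactor."""
    return 1.0 - math.exp(-da)

k = 0.2  # 1/min, hypothetical intrinsic rate constant (the What)
bench = damkohler_first_order(k, volume=1.0, flow_rate=0.1)           # 1 L at 0.1 L/min
plant = damkohler_first_order(k, volume=50_000.0, flow_rate=5_000.0)  # 50,000 L at 5,000 L/min

# Same residence time (10 min) gives the same Da, hence the same conversion,
# but only because the fluid is passive and mixing is assumed ideal.
print(bench, plant, round(cstr_conversion(bench), 3), round(pfr_conversion(bench), 3))
```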

The conditions held, but only because chemistry was simple enough to be extricated from the physics. This would be the last moment of repose.

Industrial scaling was entering a new era, one where our systems would reach out towards self-agency. They would sense and respond, writing their own rules with quiet fury.

The conditions would no longer be strained. They would break.

Act V — Piercing Through Firmament

A Curious Pseudo-Catalyst

Early industrial engineers saw biological cells as pseudo-catalysts—passive agents that galvanized the conversion of inexpensive feedstocks into useful products. Citric acid was one of those products.

James Currie, a food chemist working for Pfizer around 1917, invented a process to produce citric acid using the fungus Aspergillus niger as a pseudo-catalyst. The pharma raced to scale Currie’s process, opening the first large-scale citric acid fermentation plant in 1926.

At first, the scaled process seemed robust—enumerable, passive, non-sensitive.

Although the plants adhered to Currie’s precise sugar concentrations and pH, citric acid yields became erratic across facilities and batches. The process became unpredictable.

Eventually, Pfizer’s engineers discovered empirically that a metal cofactor, manganese, had to be below five parts per billion or citric acid production would collapse.

Trace manganese was a latent variable. It was invisible, initially, because it didn’t vary enough within a single facility’s water supply to betray its relevance.
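
Why trace manganese stayed invisible can be framed as an identifiability problem. A variable that never varies in the data is indistinguishable from the intercept, so no regression, physics-based or learned, can attribute yield to it. The minimal NumPy sketch below uses hypothetical run settings and manganese levels. It checks the rank of the regression design matrix with and without variation in manganese.

```python
import numpy as np

def design_matrix(sugar, manganese):
    """Regression columns: intercept, sugar, and trace manganese."""
    return np.column_stack([np.ones_like(sugar), sugar, manganese])

sugar = np.array([120.0, 140.0, 160.0, 180.0])   # hypothetical sugar settings, g/L
mn_one_site = np.full(4, 3.0)                    # hypothetical ppb; never varies at one site
mn_two_sites = np.array([3.0, 3.0, 40.0, 40.0])  # hypothetical ppb; two water supplies pooled

# A rank below the number of columns means some effect cannot be estimated at all.
rank_one_site = np.linalg.matrix_rank(design_matrix(sugar, mn_one_site))
rank_two_sites = np.linalg.matrix_rank(design_matrix(sugar, mn_two_sites))
print(rank_one_site, rank_two_sites)
```

At a single site, the manganese column is just three times the intercept, so its effect is silently folded into the baseline. Pooling sites restores full rank. In a design this small, though, manganese is still partly confounded with sugar.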

The condition of enumerability had been strained enough to cause a small leak, but the patch was simple enough.

Plant workers scribbled in their second lab notebooks to keep manganese below the threshold. Currie’s process survived because it could be fixed through trial-and-error.

Perhaps no one realized that the industry teetered at the edge of an abyss, beyond which it would need to endure the latent fathoms below.

Born of War

At the height of World War I, the British were running out of bullets. Germany had cut off the Allies’ access to acetone, a key ingredient to make cordite—a munitions propellant.

Under wartime pressure, Manchester professor Chaim Weizmann (1874–1952) developed the ABE (acetone-butanol-ethanol) fermentation process using the aptly named bacterium Clostridium acetobutylicum.

The Admiralty raced to scale the process at the Royal Naval Cordite Factory, where they slapped together ranks of 7,000-gallon fermentation vessels.

It was a disaster.

Seven of the first ten batches failed. An ocean of wasted, bacteria-laden mash seeped across the grounds in Dorset.

The engineers didn’t know that their slipshod serial passage technique caused strain degeneration, producing C. acetobutylicum that were permanently stuck in a non-acetone-producing state.

They had no words to describe the bistable regulatory logic inside these cells. Nor did they understand that local bacteriophage outbreaks were silently wreaking havoc inside their reaction tanks. Passivity and enumerability were holding by a thread.

The construction of new facilities and an inventive heat-shock fix rescued the process. The acetone flowed, and with it, the war was won.

Act VI — An Infinite Regression

Something Was Now Different

Elsewhere, everywhere, another war raged. The one against human disease.

Diabetes was a death sentence throughout the lifetimes of Galileo and Reynolds, and for most of Buckingham’s. The discovery of its antidote, insulin, earned the 1923 Nobel Prize in Physiology or Medicine. But the pharmaceutical industry struggled to meet the surging demand.

Securing sufficient insulin required extracting pancreatic tissue from as many as 56 million animals per year, just in the United States.

A small startup called Genentech figured out how to get bacteria to produce human insulin, obviating the need to harvest it from animals. They teamed up with Eli Lilly, the dominant manufacturer of animal insulin, to scale the bioprocess using Escherichia coli.

Humulin, recombinant human insulin (a 51-amino-acid protein), became the first recombinant drug approved for human use.

Then the industry turned to recombinant antibodies, gargantuan biomolecules more than 25 times larger than Humulin. These beasts were composed of multiple polypeptide chains and were decorated externally with a mosaic of post-translational modifications (PTMs).

Manufacturing such a complex product necessitated using an equally complex bio-factory. The cell chassis of yore were incompatible since they lacked the internal machinery to meet these product design specs.

Bioprocessing turned to its new workhorse—mammalian cells. Chinese hamster ovary (CHO) cells, in particular, became the antibody-producing substrate of choice.

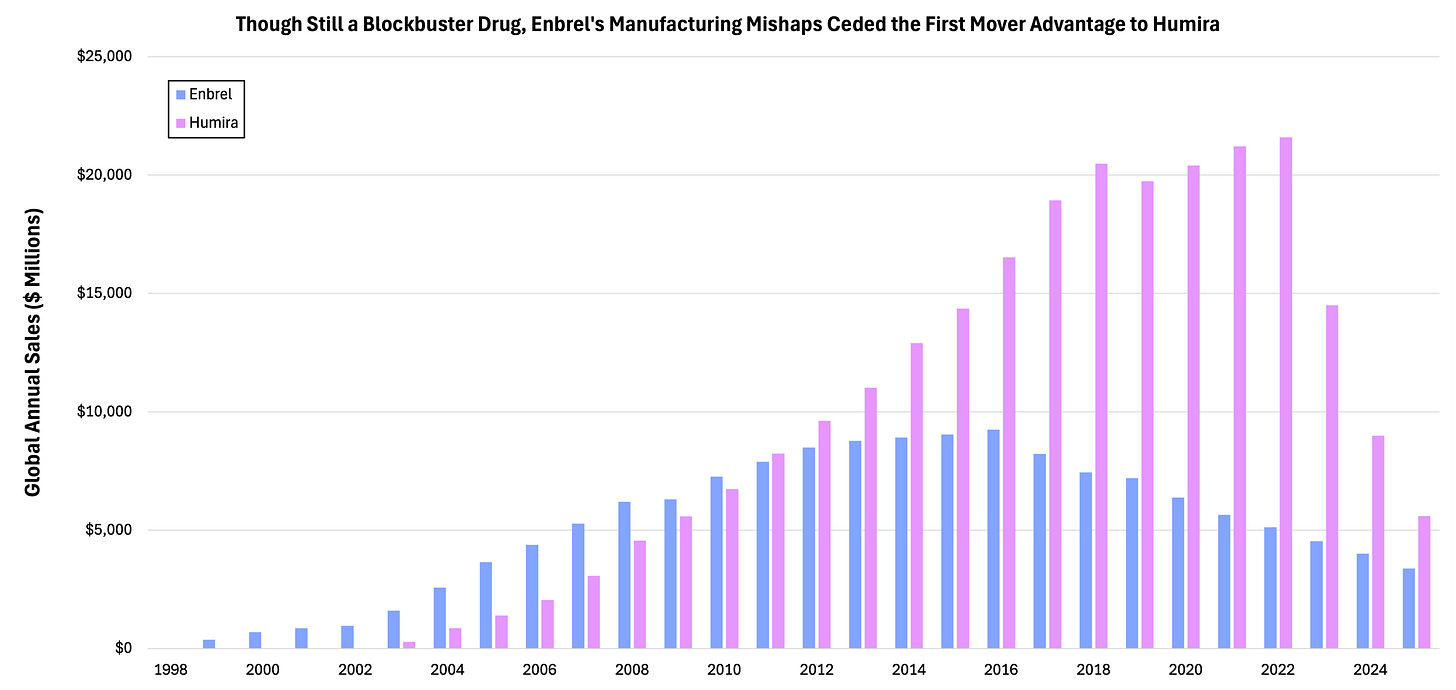

The FDA approved Enbrel (etanercept) in 1998 to treat moderate-to-severe rheumatoid arthritis. This antibody-like fusion protein was the first tumor necrosis factor (TNF) inhibitor approved for the disease, but another salvo of competing agents was not far behind. And demand was exploding.

Enbrel patent-holder Immunex charged forward with CHO manufacturing, even building a production annex in their parking lot to supplement their single production plant in Germany.

The inherent complexity of scaling bioprocesses, compounded by the sluggish pace of new facility buildout, proved overwhelming for Immunex.

The pharma’s waitlist grew to 40,000 patients, nearly half of everyone in the U.S. with rheumatoid arthritis. Even worse, two competing TNF inhibitors were approved, with one even enrolling patients directly from Immunex’s waitlist.

The window of opportunity had shut. By 2010, Enbrel sales retrenched while Humira—AbbVie’s TNF molecule—grew to become the best-selling drug in history.

Even after Amgen’s ~$10 billion acquisition of Immunex, the duo struggled to ramp Enbrel’s production capacity, with one Rhode Island facility failing to meet the FDA’s minimum 60% batch success rate.

Something was now different.

Previous epochs and revolutions bore witness to successful scaling under similarity theory. Even under the existential threat of war. Even when the three conditions were stretched beyond limit.

A second notebook scribbled with trace metal heuristics. A new facility fitted with a heat-shock protocol. These ramshackle attempts to tame latent variables, to keep forces separable, to install a mirage of passivity—they held back then. They didn’t anymore.

Worlds Within Worlds

Scaling bioprocesses is incredibly challenging because of the complex interconnectedness between fluid transport phenomena and mammalian cell biology.

Compared to bacteria, CHO cells are fickle. They’re large, with membranes that are fragile under fluid shearing forces. They evolved in the context of a multicellular organism, so their regulatory systems are adept at processing subtle environmental signals.

Process engineers typically have mastery over the physical reaction vessel. Using computational fluid dynamics (CFD) simulations, they can tune impeller speed, gas flow rates, temperature, pH, and dissolved oxygen to match the required dimensionless groups across scales.

But the bulk fluid dynamics are just the start.

CHO cells don’t live in the bulk. They experience trajectories through a continuum of microenvironments inside the bulk: high dissolved oxygen near the sparger and low near the wall, high shear at the impeller and low in the dead zones. Each of these serves as a spatiotemporal chapter in the lives of CHO cells.

Mammalian cell interiors are worlds barely known.

Networks of membrane-bound receptors work as one, acting as antennas that shuttle microenvironmental stimuli into the cell. A transient dip in hyper-local oxygen can trigger a CHO cell’s metabolism to shift state, causing lactate to accumulate and pH to drift. Even if oxygen is restored moments later, the PTM pattern of the cell’s product can be altered, and cell viability may suffer days later.

Unlike Reynolds’ dumb fluids, mammalian cells are smart. CHO cells behave like thermodynamic path functions rather than state functions. They retain a history of their past.
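
The contrast between a state-like and a path-like system can be illustrated with a generic bistable switch. This is the same kind of logic as the bistable regulation that stranded C. acetobutylicum in a non-producing state. The model is a textbook toy: a positive-feedback term x²/(1+x²) competes against first-order decay. It is not a model of any real CHO or clostridial pathway, and the parameter values are assumptions chosen so that two stable states exist. A brief stimulus that is later removed leaves the system in a different state from an identical system that never saw it. A passive fluid cannot do this.

```python
def simulate_switch(pulse_height, pulse_end=2.0, t_end=50.0, dt=0.01, r=0.4):
    """Euler-integrate the toy switch dx/dt = s(t) + x**2 / (1 + x**2) - r * x.

    x starts at 0. The stimulus s(t) equals pulse_height while t < pulse_end and
    0 afterwards. With r = 0.4 and s = 0 there are stable states at x = 0 and
    x = 2, separated by an unstable state at x = 0.5.
    """
    x, t = 0.0, 0.0
    for _ in range(int(round(t_end / dt))):
        s = pulse_height if t < pulse_end else 0.0
        x += dt * (s + x * x / (1.0 + x * x) - r * x)
        t += dt
    return x

never_stressed = simulate_switch(pulse_height=0.0)
briefly_stressed = simulate_switch(pulse_height=1.0)
print(never_stressed, round(briefly_stressed, 3))  # same final conditions, different states
```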

Every layer in the system is coupled to the ones above and below. These worlds within worlds harbor fractal-like phenomena that contort across orders of magnitude in time and space.

This is the infinite regression, without form and void.

Act VII — Learning the Residual

A Hybrid Theory

The Venetian arsenalotti’s instinct to use oversized ship-launching struts, Reynolds’ intuition to enumerate viscosity, and the Pfizer engineers’ second, fudge factor-laden notebook are one and the same. They represent the latent signal that exists in the gap between theory and practice.

Scaling similar systems beyond the frontier of the already-known has compelled a hybrid approach—the use of the universal, governing physics alongside the latent signal.

Bioprocess scaling, while incredibly complex, is yet another frontier reducible under similarity theory.

Hybrid modeling offers the best chance to transform bioprocess scaling from an alchemical art to a robust engineering practice. This approach wires a partial, known-physics backbone to learned correction terms obtained by modeling the residual structure embedded in bioprocessing systems.

Hybrid modeling is entirely consistent with the history of similarity theory and, in scarce-data regimes like bioprocessing, may be the only way forward.

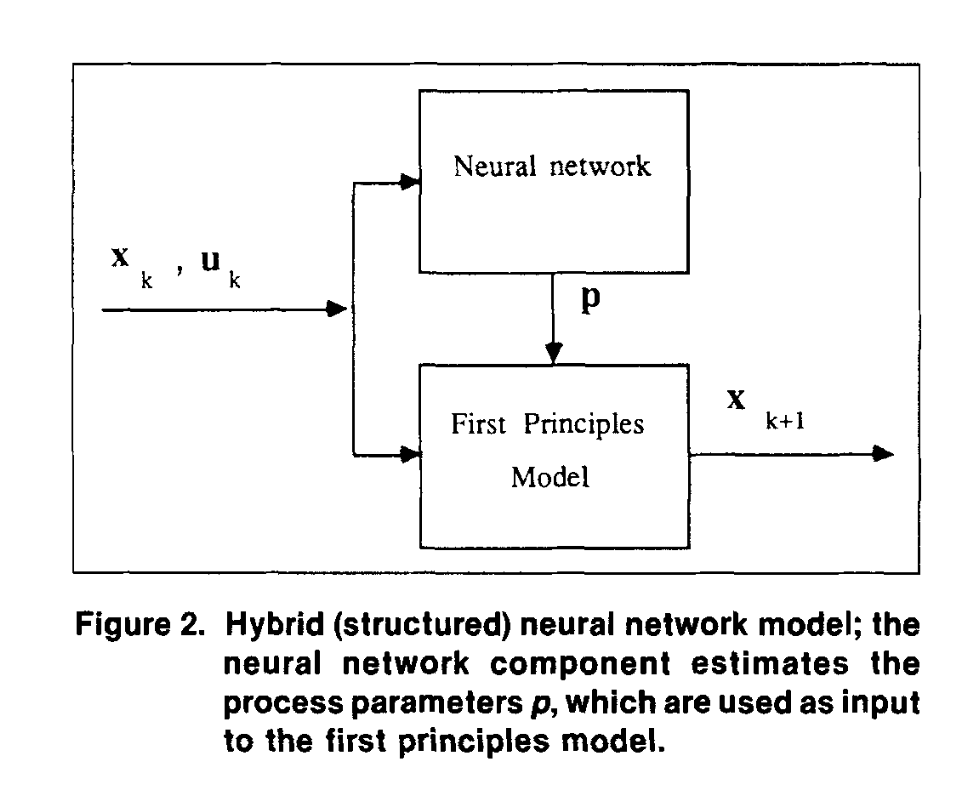

Two chemical engineers at Penn installed a neural estimator inside a first-principles, mass-balance model, breathing life into hybrid bioprocess modeling as early as 1992.

They focused the neural network on a latent, unmeasurable parameter: the system’s microbial growth rate. During training, output prediction errors were backpropagated through the physics module, giving the estimator a learning signal even though the growth rate itself was never measured.

The hybrid model achieved an order-of-magnitude lower prediction error than a non-physics-constrained comparator trained on equivalent data. Imposing the inductive bias also sped up the hybrid approach’s learning by roughly 20x.
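
The Penn architecture can be caricatured in a few dozen lines. In the sketch below, a tiny neural estimator supplies the specific growth rate μ(S) to hand-coded batch mass balances for biomass X and substrate S (dX/dt = μX, dS/dt = −μX/Y). Only the predicted biomass trajectory is compared with data, so μ is never observed directly. Everything here is an assumption made for illustration. The synthetic data come from Monod kinetics, and the yield, network size, and initial weights are arbitrary. The gradient is taken by central finite differences through the simulated mass balance rather than by backpropagation; an autodiff framework would compute it exactly. This sketches the idea and is not a reconstruction of the 1992 model.

```python
import math

DT, N_STEPS, OBS_EVERY = 0.1, 100, 10  # 10 h batch, biomass "measured" hourly
YIELD, X0, S0 = 0.5, 0.1, 10.0          # hypothetical yield (g/g) and initial g/L

def softplus(z):
    """Numerically stable log(1 + exp(z)); keeps the predicted rate non-negative."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

def mu_true(s):
    """Monod kinetics, used only to generate synthetic 'measurements'."""
    return 0.5 * s / (2.0 + s)

def mu_net(s, p):
    """Tiny estimator: 1 input, 3 tanh hidden units, softplus output (10 weights)."""
    hidden = [math.tanh(p[i] * s / S0 + p[3 + i]) for i in range(3)]
    return softplus(sum(p[6 + i] * h for i, h in enumerate(hidden)) + p[9])

def simulate(mu_fn):
    """Euler-integrate the batch mass balances; return biomass at observation times."""
    x, s, observed = X0, S0, []
    for step in range(1, N_STEPS + 1):
        # Growth cannot consume more substrate than remains in this time step.
        growth = min(mu_fn(s) * x, s * YIELD / DT)
        x, s = x + DT * growth, max(s - DT * growth / YIELD, 0.0)
        if step % OBS_EVERY == 0:
            observed.append(x)
    return observed

X_DATA = simulate(mu_true)

def loss(p):
    """Mean squared error between simulated and 'measured' biomass."""
    predicted = simulate(lambda s: mu_net(s, p))
    return sum((a - b) ** 2 for a, b in zip(predicted, X_DATA)) / len(X_DATA)

def grad(p, eps=1e-5):
    """Central finite differences through the whole simulation (stand-in for backprop)."""
    g = []
    for i in range(len(p)):
        up, down = p[:], p[:]
        up[i] += eps
        down[i] -= eps
        g.append((loss(up) - loss(down)) / (2 * eps))
    return g

def train(p, iters=100, lr=0.5):
    """Gradient descent with backtracking: a step is kept only if it lowers the loss."""
    current = loss(p)
    for _ in range(iters):
        g = grad(p)
        step = lr
        while step > 1e-8:
            trial = [pi - step * gi for pi, gi in zip(p, g)]
            trial_loss = loss(trial)
            if trial_loss < current:
                p, current = trial, trial_loss
                break
            step /= 2
    return p, current

params0 = [1.0, -1.0, 0.5, 0.0, 0.0, 0.0, 0.5, -0.5, 0.5, -1.5]
initial_loss = loss(params0)
params, final_loss = train(params0)
print(f"biomass MSE: {initial_loss:.4f} -> {final_loss:.4f}")
```

The substrate cap inside the mass balance is where the physics does real work. Whatever the network proposes, the model cannot create biomass from substrate that is no longer there.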

But as soon as these results diffused into industry, they were forgotten.

That era’s neural networks were too brittle to train and too slow to run. Bioprocess training data was too sparse and expensive to collect. Regulators didn’t have the language to audit black-box models within the biomanufacturing industry.

Meanwhile, brute-force parameter mapping frameworks like Design of Experiments (DoE) offered a legible, yet uninspired alternative doctrine.

But today is a different era.

Today’s AI accelerators are 100 million times more capable than the scientific workstations of the 1990s. Inline process analytical technology (PAT) sensors have turned bioreactors into veritable panopticons of data. Parallelized Ambr 250 bioreactor fleets can map parameter space at relatively high throughput. Simulation technology for computational fluid dynamics (CFD) has exploded. Regulators are now fluent in the lexicon of machine learning.

The stage is set.

The Known Unknowns

When measured quantities and known physics are insufficient, hybrid modeling techniques can decipher CHO’s residual structure, transforming it into human-legible bioprocess improvements.

In a perfusion CHO culture, one team collapsed the heterogeneous ensemble of unmeasurable, cell-inhibitory metabolites into a single latent fudge-factor (Φb). The milieu of process-relevant junk became a known unknown.

Crucially, Φb wasn’t left floating. It was embedded inside a physical mass balance framework, constraining its dynamics within the bioreactor’s governing ordinary differential equations (ODEs).

The enumerated process variables and predicted Φb values formed an augmented feature space that a downstream neural network could leverage to predict a dynamic media exchange rate schedule.

The result was a 50% increase in volumetric productivity, which the team validated in a 5 L perfusion bioreactor. The hybrid model was trained on data from a 250 mL Ambr bioreactor, and its key parameters held across scales.

Modern hybrid models can learn more than latent state variables. They’re able to converge on multi-term kinetic rate laws that survive even broader leaps in scale.

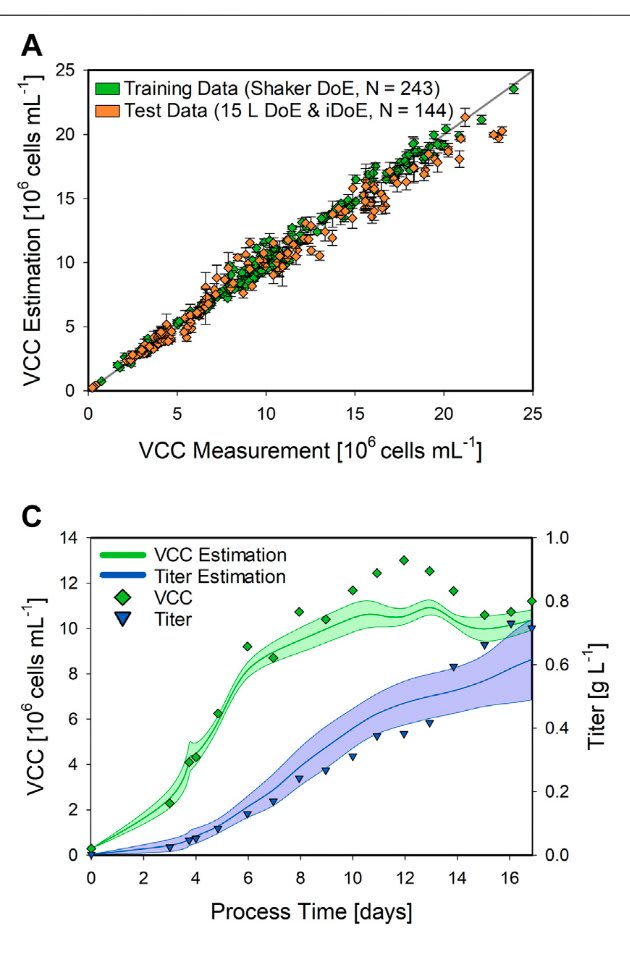

Another hybrid architecture surfaced the residual not as a hidden state, but as specific rate laws for cell growth and product formation. The upstream neural network propagated these laws through a set of mechanistic balance equations.

Despite training on a minimal data corpus from a 300 mL shake flask, the hybrid setup transferred to a 15 L stirred-tank bioreactor, predicting viable cell concentration and product titer with roughly 11% and 18% normalized error, respectively.

Because the model separated the residual metabolic feature space from the governing hydrodynamics, it required only modest recalibration.

Modern hybrid approaches are beginning to speak operational language, boosting their industrial utility.

A recent model trained on proprietary CDMO data achieved an average prediction error of just 6% when forecasting end-of-cycle antibody yields on Day 16 using only data through Day 9. The engineers estimated that a one-week compression could expand their facility’s capacity from 18 to 26 runs per year, reducing variable costs by approximately 20% in turn.

And yet, there is an even loftier aspiration—the absorption of residual knowledge back into the governing physics.

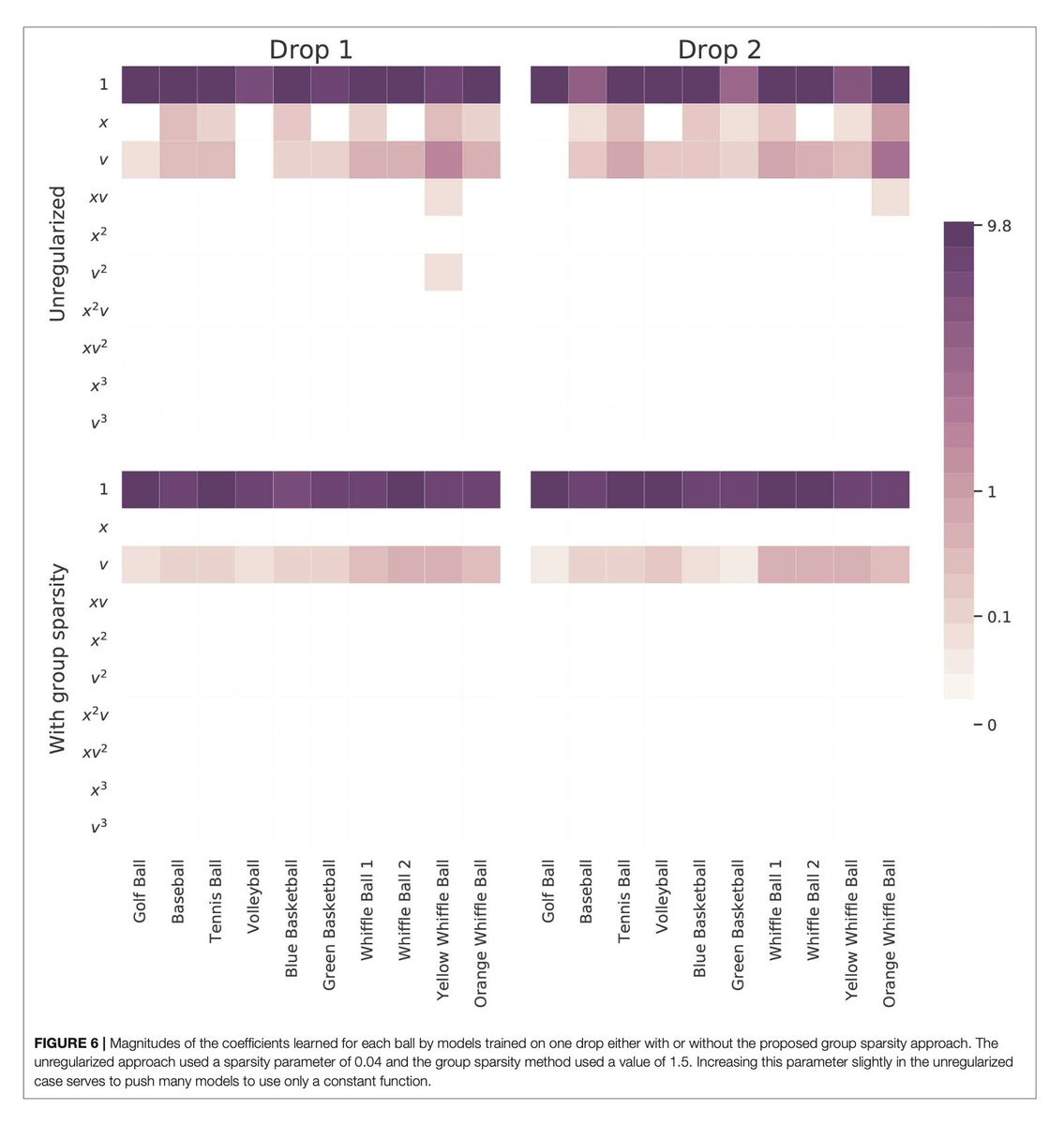

Techniques like sparse identification of nonlinear dynamics (SINDy) recover governing equations from time-series data by imposing an inductive bias toward sparse equation structure, which curbs a flexible model’s tendency to overfit noise. Applied to biology in implicit form, SINDy could learn Michaelis-Menten enzyme kinetics from process data.
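
SINDy’s core step is a sparse regression. It evaluates a library of candidate terms on the data and keeps only the few that matter. The sketch below implements sequentially thresholded least squares, the optimizer from the original SINDy work, in NumPy. It runs on a noise-free logistic growth curve with exact derivatives, and the library, threshold, and parameters are illustrative choices. Real process data would require estimating derivatives from noisy measurements. This is the explicit, polynomial form. Recovering rational laws such as Michaelis-Menten requires the implicit variant, which this sketch does not implement.

```python
import numpy as np

def stlsq(theta, dxdt, threshold=0.05, iters=10):
    """Sequentially thresholded least squares, the sparse regression used in SINDy.

    theta: (n_samples, n_terms) library of candidate functions evaluated on data.
    dxdt:  (n_samples,) time derivatives. Returns a sparse coefficient vector.
    """
    xi = np.linalg.lstsq(theta, dxdt, rcond=None)[0]
    for _ in range(iters):
        small = np.abs(xi) < threshold
        xi[small] = 0.0                      # prune negligible terms
        big = ~small
        if big.any():                        # refit using only the surviving terms
            xi[big] = np.linalg.lstsq(theta[:, big], dxdt, rcond=None)[0]
    return xi

# Synthetic logistic growth, dx/dt = r*x*(1 - x/K), with hypothetical r and K.
r, K, x0 = 0.8, 10.0, 0.5
t = np.linspace(0.0, 10.0, 200)
x = K / (1.0 + (K / x0 - 1.0) * np.exp(-r * t))  # exact solution
dxdt = r * x * (1.0 - x / K)                      # exact derivative (no noise)

library = np.column_stack([np.ones_like(x), x, x**2, x**3])
names = ["1", "x", "x^2", "x^3"]
xi = stlsq(library, dxdt)
print({n: round(float(c), 4) for n, c in zip(names, xi) if c != 0.0})
```

Expanding r·x·(1 − x/K) gives 0.8x − 0.08x², so the sparse fit should retain only the x and x² terms.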

Meanwhile, BuckiNet uses Buckingham’s own Pi theorem as a constraint. Given a set of measured variables and their units, the network learns which dimensionless groupings best collapse the data, much as Reynolds’ ρUD/μ collapsed his.

BuckiNet doesn’t eliminate the need to specify variables. Instead, it accelerates the combinatorial search over variable groups.

When the three tenets of similarity theory are destroyed, hybrid modeling offers a rebuttal.

Warning of Their Own Incompleteness

Hybrid modeling is not a panacea. It doesn’t fully restore the three conditions of similarity theory, but it does help bioprocess engineers work productively in their absence.

Enumerability — CHO systems can’t be enumerated in advance, violating Buckingham’s caveat. The clever use of latent placeholders like Φb is a tacit admission of this fact. Breathed into mathematical form and constrained by physics, these pseudo-residual correction terms absorb variables the engineer never named. But only if those variables left fingerprints in the training data.

Passivity — CHO cells aren’t like Reynolds’ passive fluids. They remember and adapt, outputting variables of their own making. By isolating and muting the known physics, hybrid models can better capture the subtle signatures of cellular history—metabolic switches, epigenetic drift, wear-and-tear. But the network’s portrait of cell behavior is only painted with the conditions it has seen.

Non-Sensitive Interdependence — CHO metabolism is riddled with sensitive couplings that outpace classical dimensional analysis. Hybrid architectures offer a partial viewport. By embedding governing ODEs, they tie the neural components’ learned corrections to a physically valid manifold. But the model can still be wrong; conservation only guarantees that the error is physically plausible.

We should be optimistic, but not hyperbolic.

No published study has yet demonstrated hybrid model extrapolation from bench to manufacturing scale (>1,000 L) for a CHO process. The evidence is encouraging at the scales where it does exist, however.

And even above roughly 200 L, new physical regimes can manifest, creating microenvironments that no bench-scale cell trajectory has experienced.

These are the Leviathans lying in wait, eager to test the resiliency of hybrid process modeling.

Act VIII — The Arsenal, Late Evening

The pale marble statues stood as ghostly sentinels, their unblinking eyes cutting through the Arsenal’s suffocating calm. Long gone from the plaza was Galileo’s trio, his conduit for the primitives underlying similarity theory.

Veneered with decades of dried pitch, the arsenalotti’s struts still lined the slipways, unexplained and enduring.

The art of scaling, of applied ontology, has remained steadfast since the Arsenal’s cornerstone was set.

Human ambition drives similarity theory through leading and lagging epochs.

When it leads, we understand everything. Its light is a luminous sun, towards which industrialization blossoms. But its potency yields hubris. We forget to question what we don’t understand.

When it lags, we understand nothing. The torchlight is swallowed by the void’s maw, and what was once science transmogrifies into magic, conquerable only through esoteric ritual.

The tired aphorism that bioprocess scaling resembles alchemy is a transient cultural artifact of a lagging era.

Either biology-in-vessels belongs to some separate, irreducible category, or it does not.

I think it does not.

The shape of progress towards taming these systems is unknown. It is probably not linear, yet there aren’t enough proof points to assert the exponential.

The urgent need to meet disease with therapeutic abundance will fuel our resolve. With few tackling this space today, the low-hanging fruit may be closer than we think. The next leading epoch may not be far.

We must remain immune to arrogance, yet confident in the dark.